Saturday, April 22, 2006

iclnet.org is back up!

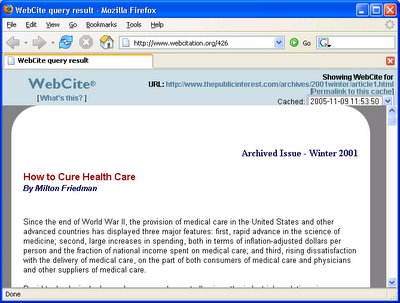

WebCite

WebCite® is an archiving system for webreferences (cited webpages and websites), which can be used by authors, editors, and publishers of scholarly papers and books, to ensure that cited webmaterial will remain available to readers in the future.

The canonical reference for WebCite appears to be a 2005 article in the Journal of Medical Internet Research (JMIR) entitled “Going, Going, Still There: Using the WebCite Service to Permanently Archive Cited Web Pages” by Eysenbach and Trudel. I also found a 2003 poster about the service, so it appears to have been around for a while.

WebCite is a great idea for combating link rot, although other archiving services like Spurl.net and Hanzo:web could also be used. The advantage to WebCite is that they also provide "impact statistics" on cited web pages.

I had never seen WebCite used before, so I searched for “WebCite” in Google Scholar to see if it had been widely adopted. The only articles I could find that used the system were from JMIR, which I assume has a policy that enforces use of WebCite for all their articles. Here’s an example of a WebCite URL:

http://www.webcitation.org/426

I may use WebCite the next time I write an article. The only thing I’m concerned about is the long-term survival of WebCite. For several days I was unable to access their website. If their service is not entirely stable, it makes me wonder how long they’ll be around.

Thursday, April 13, 2006

Candidacy Exam is over

Bill Arms, who served on my committee, gave a really great talk after my exam about the Cornell Web Library. He published an article about it in D-Lib Magazine (same issue as our paper on crawler activity) and has a more technical paper about it accepted to JCDL 06. The library is based on the collections from the Internet Archive, and it will let researchers perform Web research much more easily than they can today. We may be able to use the library to perform some work with Warrick since it contains a number of lost websites.

URL Canonicalization

For example, the URL

http://www.Harding.edu/USER/dsteil/www/abc/../index.htm

could be normalized to produce the canonical URL:

http://www.harding.edu/user/dsteil/www/
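
The normalization in the example above can be sketched in a few lines of Python. This is only a rough illustration under stated assumptions, not how Warrick or any search engine actually does it: the function name `canonicalize`, the `DEFAULT_PAGES` set, and the `lowercase_path` flag are all made up for this sketch. Note that lowercasing the path is only safe when the web server treats paths as case-insensitive, so it is left as an option.

```python
import posixpath
from urllib.parse import urlsplit, urlunsplit

# Hypothetical set of default document names to strip; real policies vary.
DEFAULT_PAGES = {"index.htm", "index.html"}
DEFAULT_PORTS = {"http": 80, "https": 443}

def canonicalize(url, lowercase_path=True):
    """Return a canonical form of url (a sketch, not a complete policy)."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()

    # Host names are case-insensitive; drop the port if it is the default.
    host = parts.hostname or ""
    if parts.port and parts.port != DEFAULT_PORTS.get(scheme):
        host += ":%d" % parts.port

    # Resolve "." and ".." segments, keeping a trailing slash if present.
    path = parts.path or "/"
    had_slash = path.endswith(("/", "/.", "/.."))
    path = posixpath.normpath(path)
    if had_slash and not path.endswith("/"):
        path += "/"

    # Strip a default document name such as index.htm.
    head, _, tail = path.rpartition("/")
    if tail.lower() in DEFAULT_PAGES:
        path = head + "/"

    # Assumption: the server is case-insensitive, so the path can be folded.
    if lowercase_path:
        path = path.lower()

    # Keep the query string, drop the fragment.
    return urlunsplit((scheme, host, path, parts.query, ""))

print(canonicalize("http://www.Harding.edu/USER/dsteil/www/abc/../index.htm"))
# prints http://www.harding.edu/user/dsteil/www/
```

Two URLs can then be compared by canonicalizing both with the same policy and checking the results for equality, although that only helps if the policy matches what the server itself considers equivalent.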

Search engines typically use different URL canonicalization policies, which makes it difficult for Warrick to tell if URL x from MSN is the same as URL y from Google. I’ve noted some peculiarities in my blog here, here and here. Matt Cutts at Google also discussed some of their canonicalization policies back in Jan 2006.

I have not found much work in the literature about URL canonicalization/normalization. RFC 3986 defines some standard normalization procedures that should be applied. Pant et al. (2004) have a section about it in their chapter Crawling the Web from the book Web Dynamics. The first paper I’ve seen that deals with the issue head-on is by Sang Ho Lee et al. (2005), "On URL normalization".

I also checked Wikipedia and didn’t find anything about URL canonicalization. I decided to create a page about it and added a reference to it from the web crawler page. That was the first page I ever created on Wikipedia. Proverbs 25:2 – “It is the glory of God to conceal a thing; but the glory of kings is to search out a matter.” I’m no king, but I think God actually delights in our effort to learn about the great world He has created, and I appreciate Wikipedia providing a unique resource for us to consolidate and share our learning.

Sunday, April 02, 2006

Warrick reconstructs JaysRomanHistory.com

A couple of quotes from their site:

Welcome! This website has been put back on the Internet by friends of Jay King, the original author, who died unexpectedly in 2005. We didn't want Jay's excellent reference site to be lost forever because it no longer had a home on the Internet at SJSU.

and

This site has been selected as a valuable educational Internet resource for Discovery Channel School.

Update on 4/30/06:

This week I received an email from Carter R., a webmaster who had used Warrick back in Jan 2006 to reconstruct two of his sites when the hard drive of his personally-maintained web server crashed:

http://dckickball.org/

http://cubanlinks.org/

He writes about using Warrick in his blog entries:

http://cubanlinks.org/blog/articles/2006/01/17/im-back-sort-of

http://cubanlinks.org/blog/articles/2006/01/20/getting-there

From Carter's blog:

One bright spot has been the recovery of my content via a tool called Warrick that uses various caching services and APIs from Google, Yahoo, the Internet Archive and others to reconstruct lost websites. So far, I’ve recovered posts for Cubanlinks going all the way back to its first post in 2002...

Although Warrick wasn't able to recover all of Carter's websites, he seemed pretty thankful for what he was able to get back:

... I’ll describe the rebuilding process in more detail as I go along. The main point that I want to get across is this: BACK UP YOUR DATA!. The shock of losing a year’s worth of blood and sweat (regarding the code that powered DCKickball) still has yet to fully sink in. Don’t pull a Carter.

It’s unclear how many posts never got recovered with Warrick in the first place. Eyeballing it, I’d say I have at least 80% of my posts. And you know what? I’ll take that.

These sites are definitely the first to be reconstructed with Warrick without my help.

Saturday, April 01, 2006

Wikipedia, the study aid

In preparation for the exam, I have come to realize just how invaluable Wikipedia is as a study tool. I’ve also become somewhat addicted to updating resources in my field of study. I recently updated entries on digital libraries, OAI-PMH, and digital preservation. I also found a comprehensive section on the Churches of Christ; I’m a member of this church and learned some things I never even knew about it!